AI: Media and Information Quality

Driven by extensive data collection and enormous investments in machine learning, a handful of companies are increasingly able to algorithmically influence billions of users in ways both powerful and subtle. Media, journalism, and information quality play a central role in promoting healthy democratic societies. Our reliance on digital platforms, which themselves increasingly rely on AI to select the content we see, has therefore raised significant concerns about the role autonomous systems play in influencing human judgment, opinions, perceptions, and even election outcomes. Addressing these challenges requires grappling with difficult governance questions that pit free speech against regulation by governments or private parties. It also raises significant questions about the role of transparency in these influential algorithms, and whether such transparency could enable a competitive market for news-feed algorithms customized to the needs of each user.

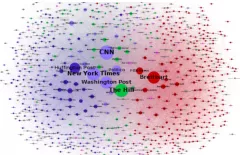

We are examining this accelerating shift from a state-citizen relationship to a platform-consumer one through new empirical research on how autonomous systems affect the production, dissemination, and consumption of media across these platforms. Additionally, through innovative partnerships, we are developing tools that help both users and platforms better respond to these challenges. The cornerstones of this work are the Harmful Speech and Media Cloud projects. Through them, we work to increase understanding of the mechanisms by which media manipulation occurs, track its prevalence within media ecosystems, and assess the potential of alternative interventions, with the aim of evaluating the impact of these technologies on societal attitudes and democratic institutions. Through this work, we are simultaneously furthering our understanding of the impact of AI on the media and deepening our partnerships with the private sector to respond effectively to that impact.