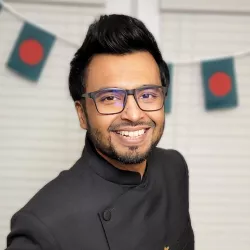

Upol Ehsan makes AI systems explainable and responsible so that people who aren't at the table do not end up on the menu.

He is a Doctoral Candidate at Georgia Tech and an affiliate at Data & Society. His work has pioneered the field of Human-centered Explainable AI (HCXAI) and has received awards at top-tier venues such as ACM CHI, HCII, and AAAI AIES. At BKC, his project, the Algorithmic Imprint, will expand AI accountability and address an intellectual blind spot in Responsible AI by focusing on an unexplored area: the harms that persist in an AI system's afterlife, after it has been decommissioned.